OpenAI and ChatGPT are the new cool kids on the block, so let’s have a look at how startups and software development companies can leverage them to create cutting-edge applications. OpenAI is already available as a managed service on Azure, and ChatGPT is coming soon.

In this post we’ll have a look at:

- What is OpenAI

- Dall-E

- GPT-3

- ChatGPT

- The 4 GPT-3 language models that startups can use

- The Codex models

- Potential Use Cases for Startups (overall and per language model)

- What apps are startups already developing with OpenAI

- OpenAI on Azure: How to get access to it, integrations, advantages and pricing models + cost considerations

- OpenAI competition

- Limitations of OpenAI

- Resources and further information

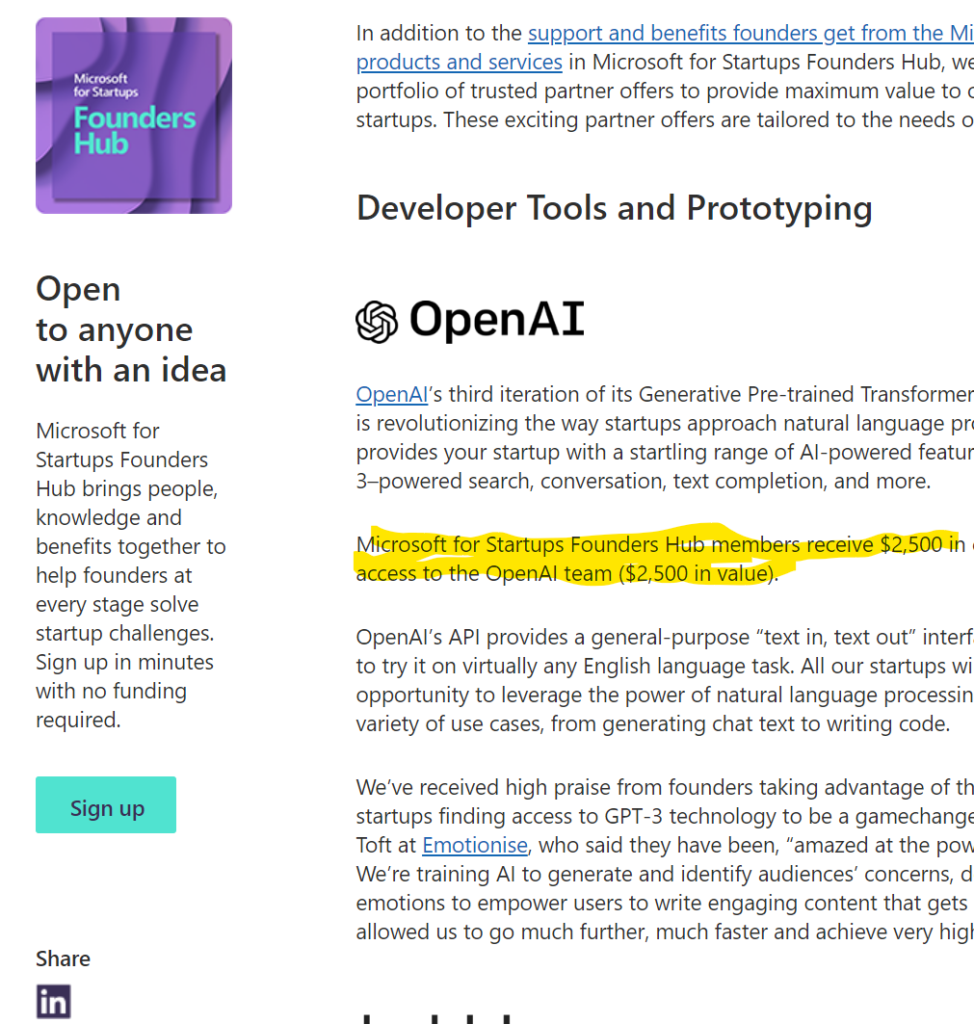

(Note: If you are a startup, you can get $2.500 USD in free credits for OpenAI by applying to the Microsoft Founders Hub program.)

Introduction to OpenAI, Dall-E, GPT-3 and ChatGPT: Capabilities and Potential Use Cases for New Apps

OpenAI is a research company that was founded in San Francisco in 2015 by Elon Musk, Sam Altman (former president of Y Combinator) and others, and backed early on by investors such as Peter Thiel. Its goal is to develop and promote friendly AI that benefits humanity as a whole. The initial backers pledged $1 billion USD, and Microsoft invested an additional $1 billion USD in 2019.

OpenAI has released 3 AI solutions that have become super popular in the past 12-24 months: GPT-3, Dall-E and ChatGPT.

GPT-3

GPT-3 (Generative Pre-trained Transformer 3) is a language model trained on hundreds of billions of words of internet text. It can generate human-like text, translate text, summarize text and answer questions. It can write poems and science fiction, and it can power chatbots and virtual assistants that hold natural conversations with humans.

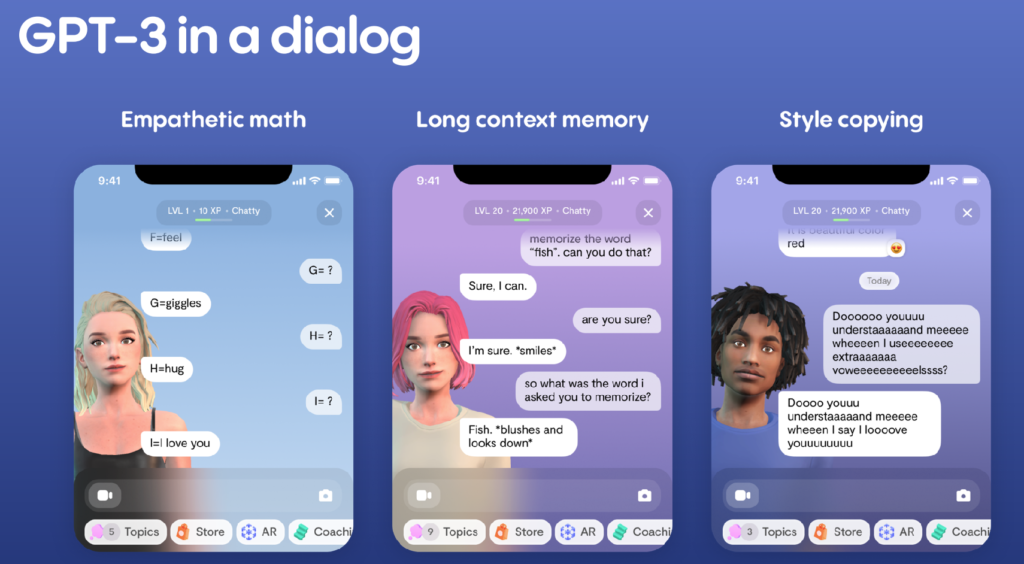

In a dialog, GPT-3 can do empathetic math, keep the context (“remember”) and copy your style of communication. Here are three neat examples from the presentation of the startup Replika (link) that illustrate these capabilities.

Dall-E

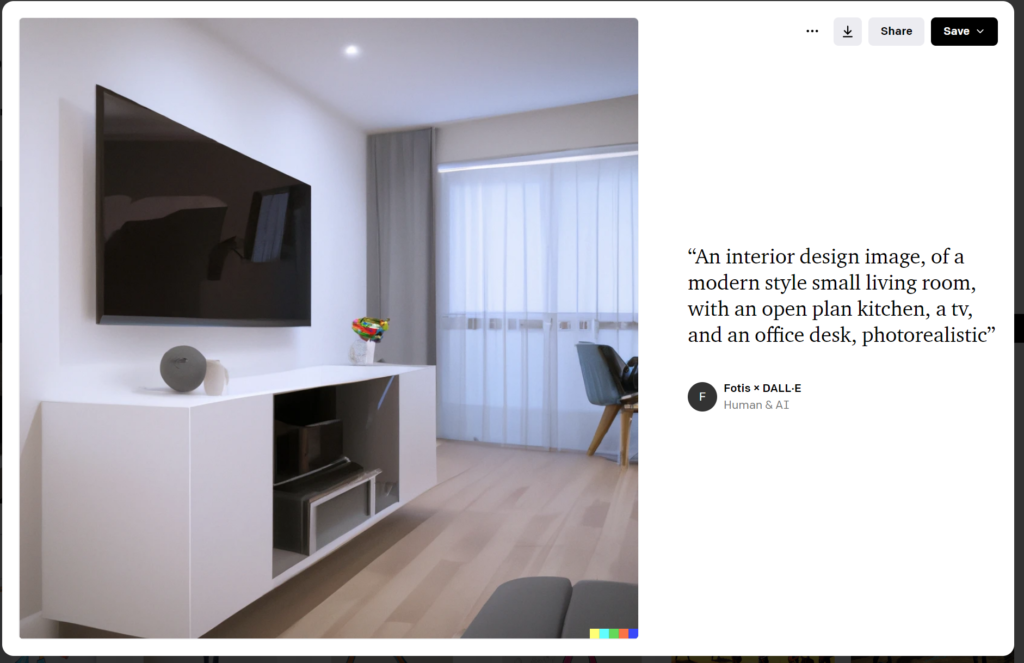

Dall-E is a deep learning model that can generate digital images from a text description. It can also create images “similar” to an image you give it, or it can “continue and extend” your images.

In the example below, I asked Dall-E to create the interior design for a small living room. It didn’t get it 100% correct, but it wasn’t too bad for a start.

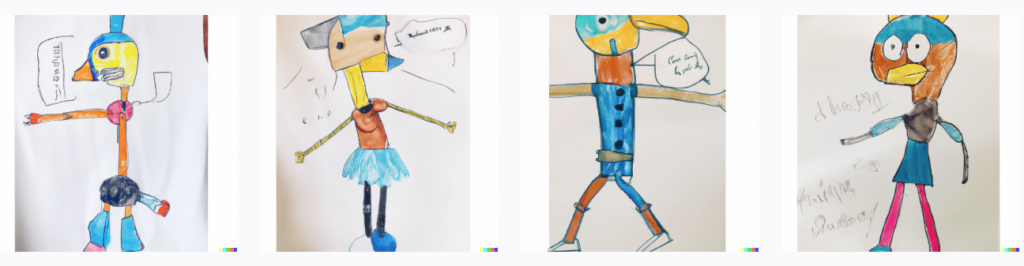

One of the following 4 paintings belongs to my 6-year-old daughter. I gave it as input to Dall-E, and it created the other three paintings based on my daughter’s style. My daughter couldn’t actually identify which one was hers when I asked her a couple of weeks later.

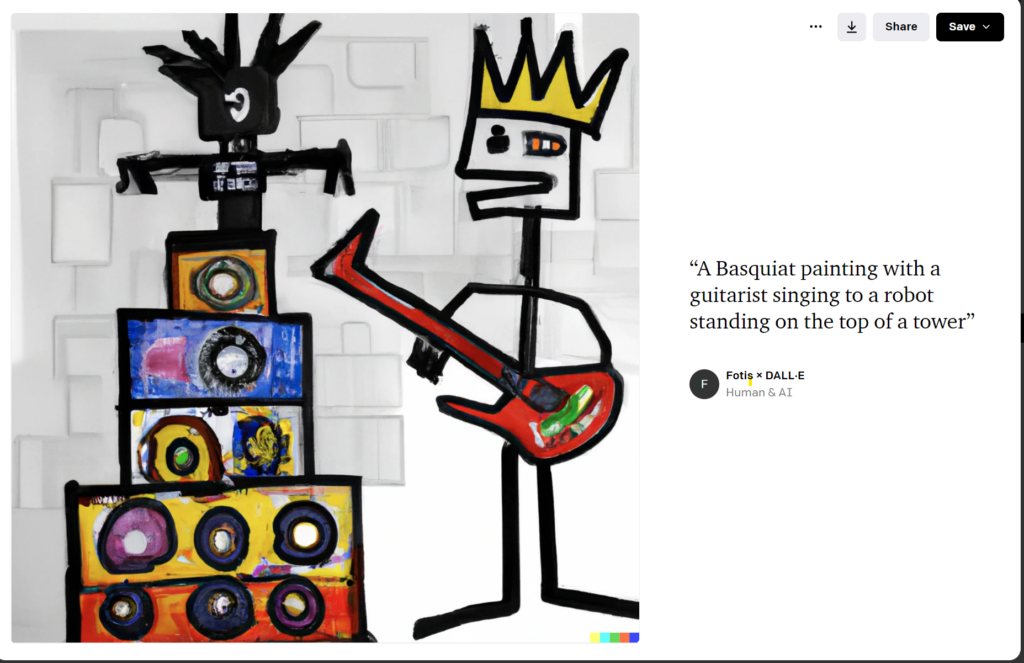

Getting more creative, I asked Dall-E to act as if it were the famous painter Basquiat and paint the below for me. I could see this as a painting hanging in a room.

Tip for music lovers: I am more into creating music, and there is an open source AI project for auto-creating loops from text prompts. Try it at https://www.riffusion.com. I’m very much looking forward to the era when AI will help us write Cubase songs with just text prompts such as “Write a drums MIDI rhythm in Cubase, similar to the intro from Paradise City, and add a bass line in A minor, with the funky bass sound of RHCP”.

But enough with the artistic endeavors; let’s get back to business. Let’s have a look at ChatGPT.

ChatGPT

ChatGPT is the conversational version of GPT-3 (it is built on the GPT-3.5 series of models) that can be used to create chatbots, voice assistants, and other conversational AI applications. Its output can be text or even code (e.g. a Python script).

Please note that ChatGPT is coming soon to Azure (announced by Satya Nadella on January 16, 2023).

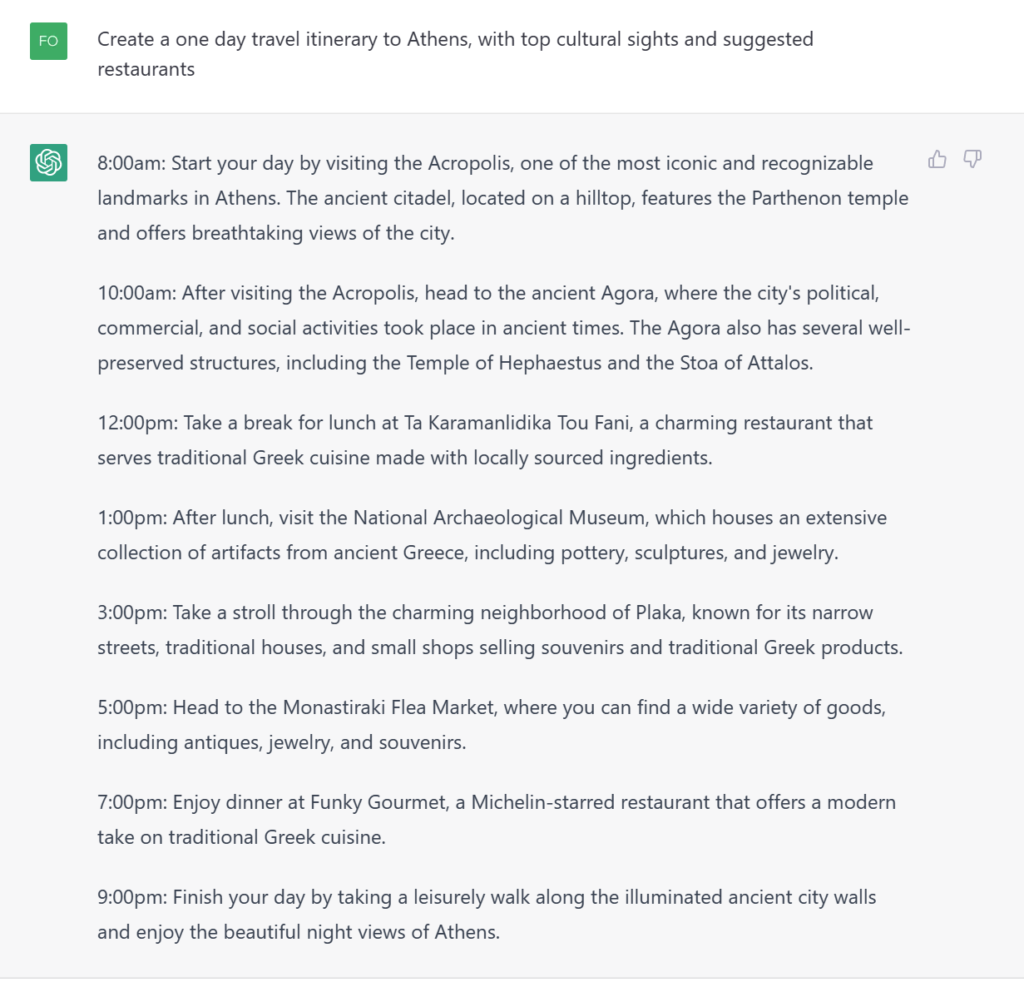

If you have already played with ChatGPT, just skip this section. Otherwise, here are a few examples of what it can do:

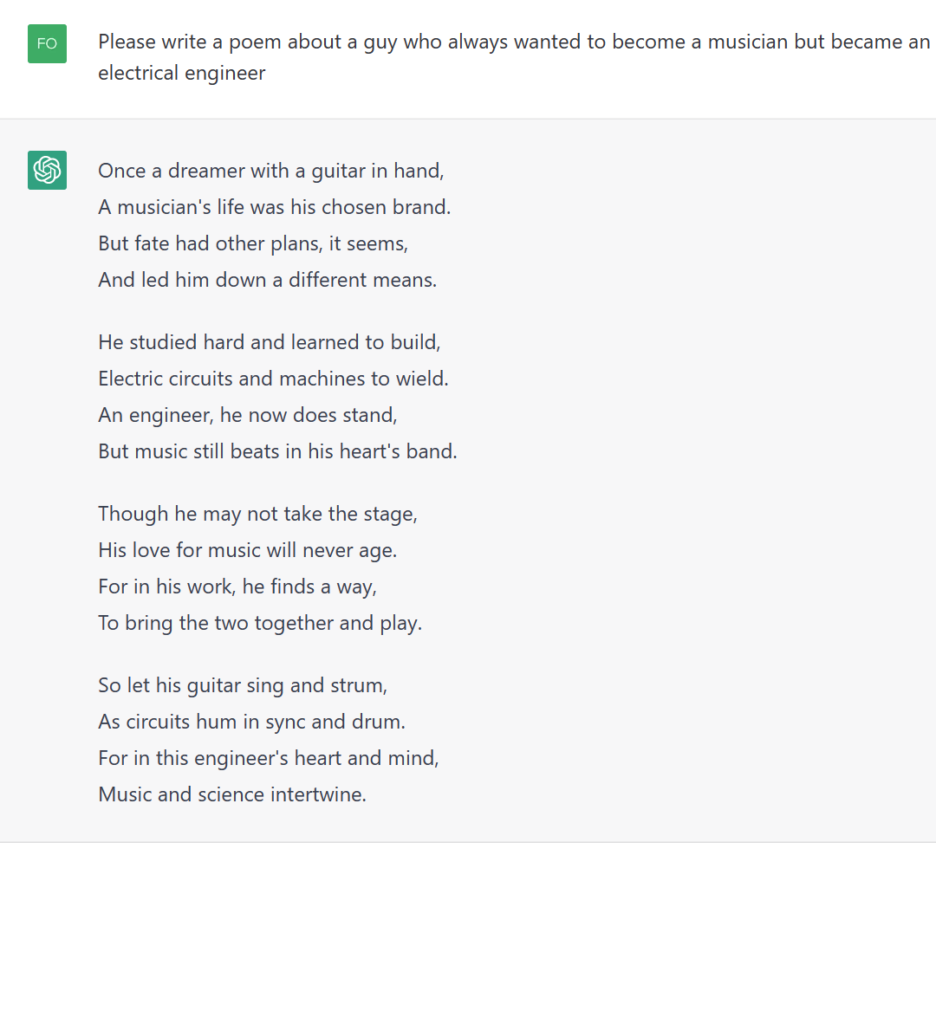

Can ChatGPT create a poem dedicated to all of us musicians trapped in the body of an electrical engineer?

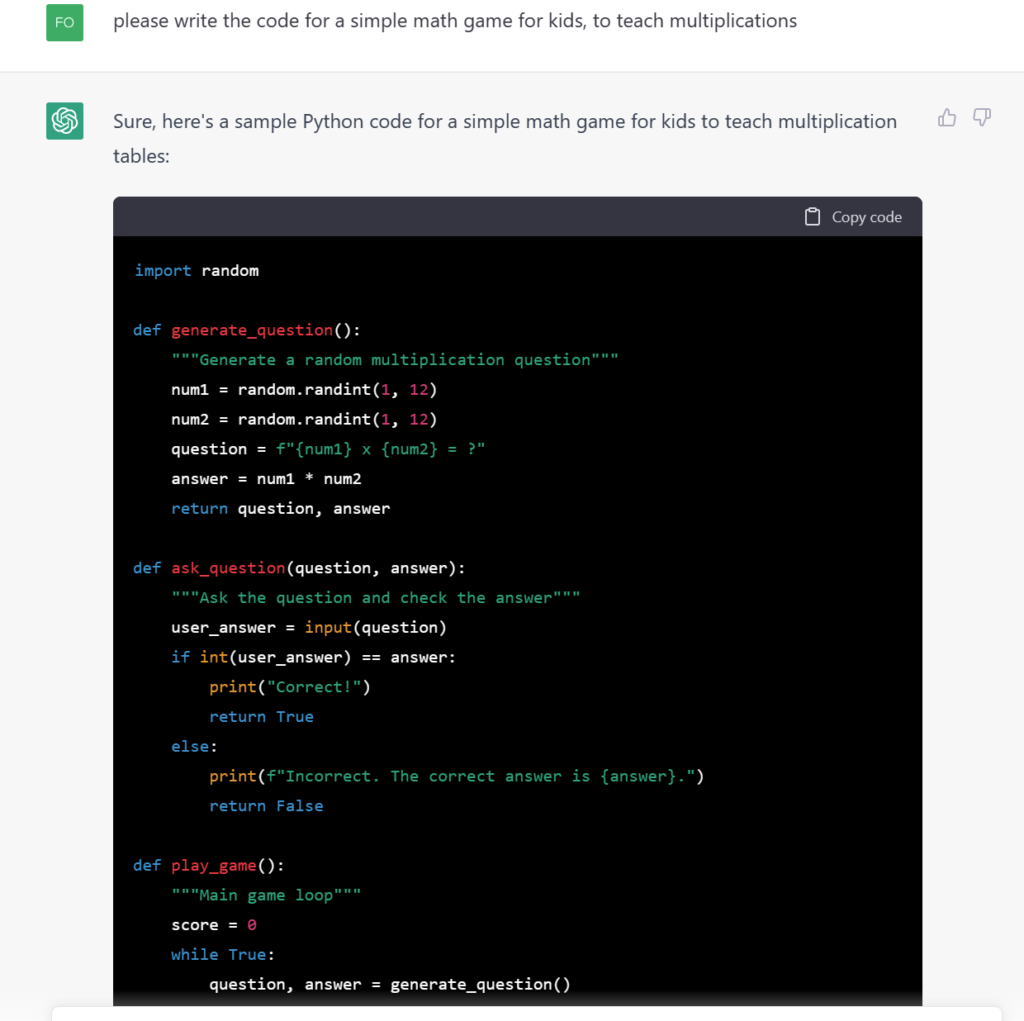

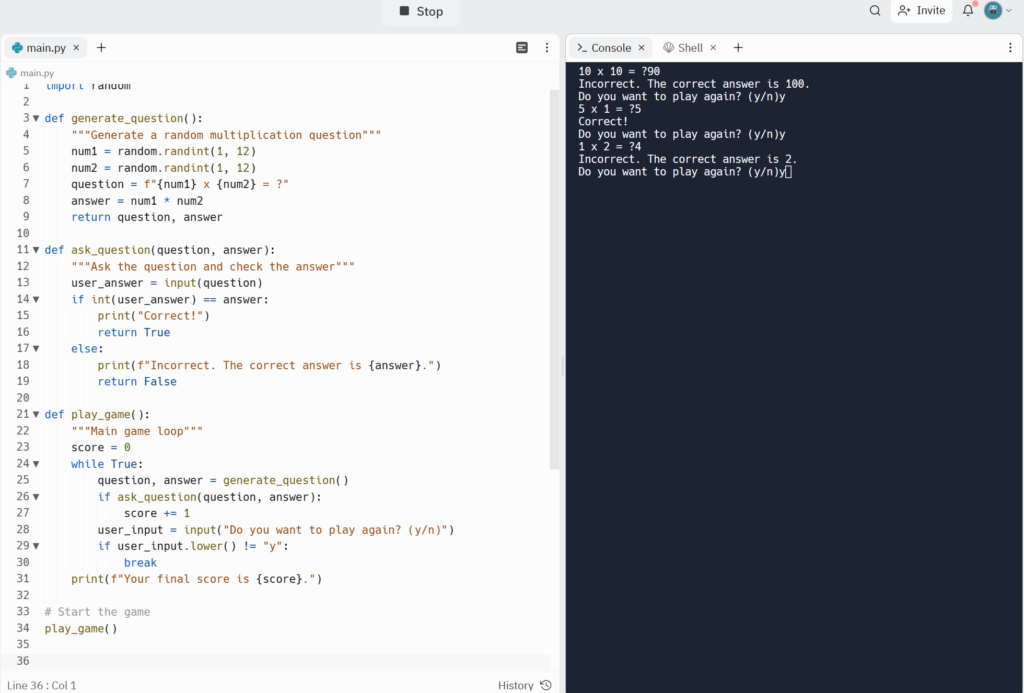

Then I asked ChatGPT to write the code for a simple educational game that I can give to my daughter to help her learn multiplication tables. Here is the code I got:

I tried the code in Repl.it to see if it actually works, and indeed it works as expected:

Obviously, you should not blindly trust the code produced. Since I am not a developer, I asked my brother (who is a ninja-guru dev) to review code that ChatGPT produced from my text prompts (he would compile it just by reading it, pure magic to my eyes). He agreed that it is in pretty good shape for general-purpose code: obviously far from substituting the work of devs, but good enough to save them a few hours per week.

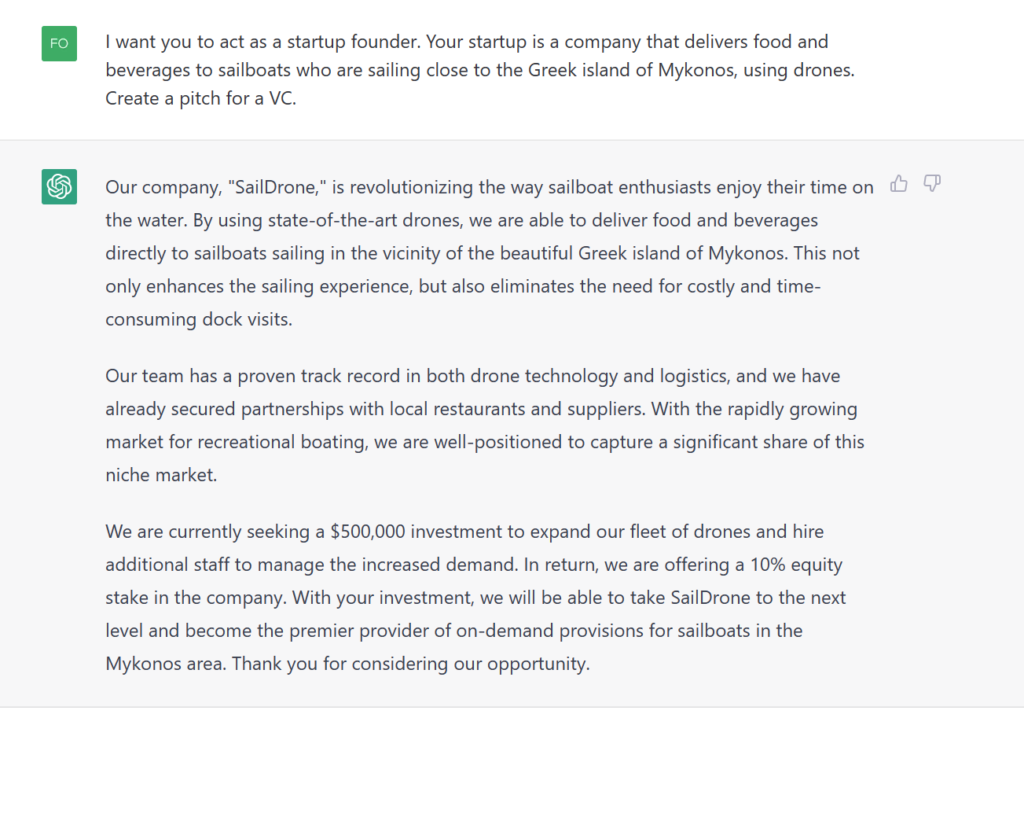

Going back to the capabilities of ChatGPT, I asked it to create a VC pitch for a startup idea I gave it as input: a drone delivery service for sailboats outside of Mykonos island in Greece.

Well, it even came up with a nice name for my startup, “Saildrone”. Here is the pitch:

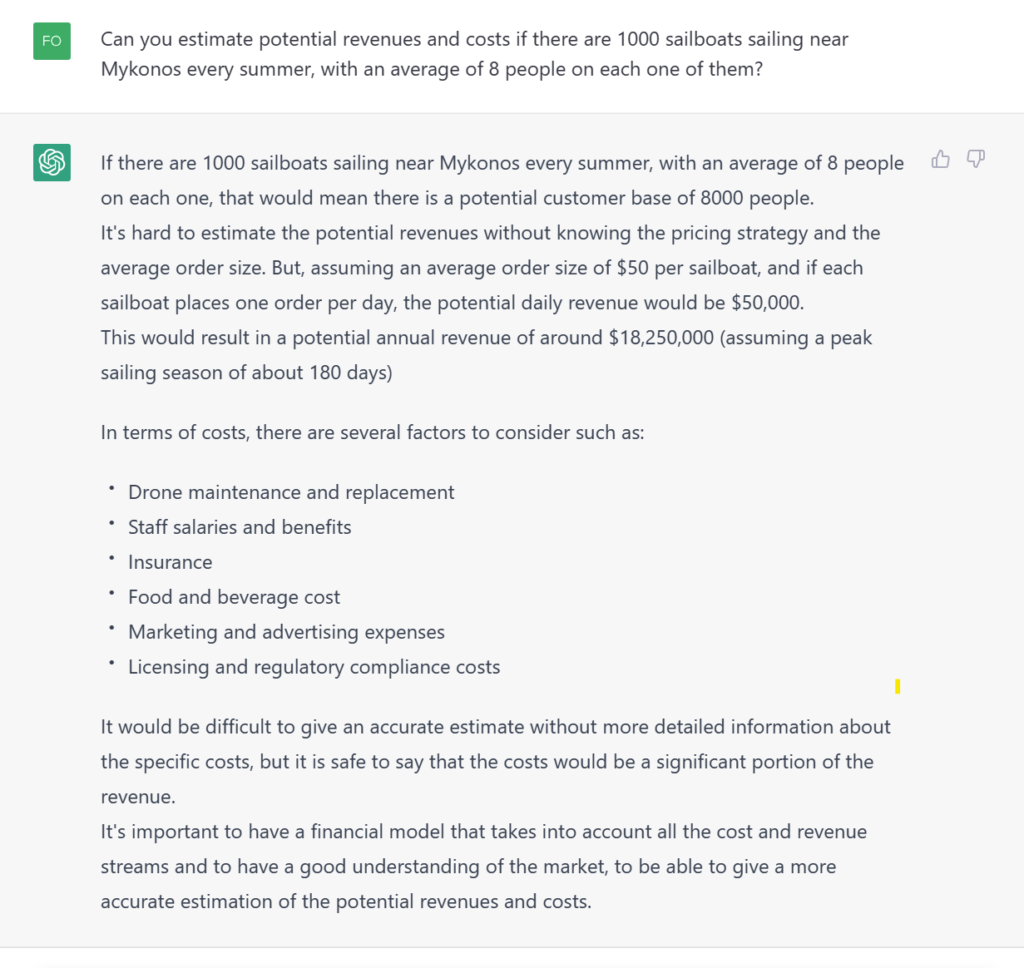

ChatGPT remembers the context of the previous questions and builds on that knowledge. Here is a follow-up question and its answer:

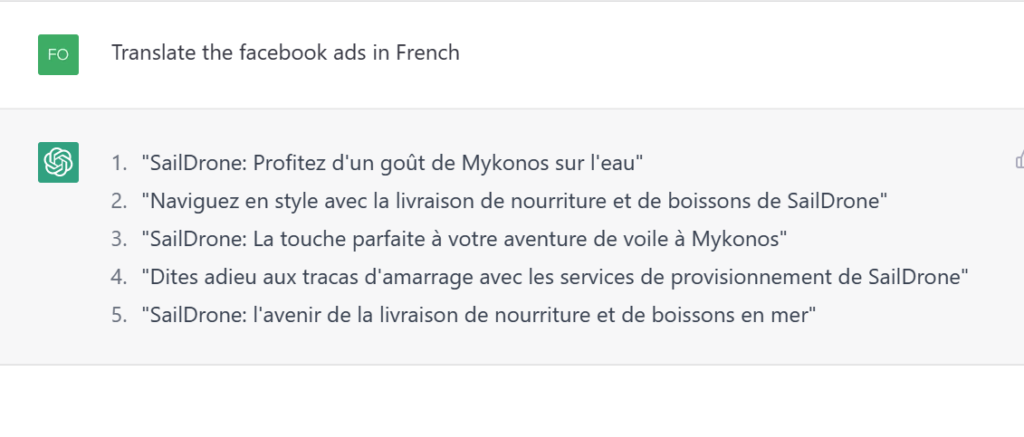

It can get creative and provide ideas for ads on social media and the internet.

And obviously, translation into French is an easy task:

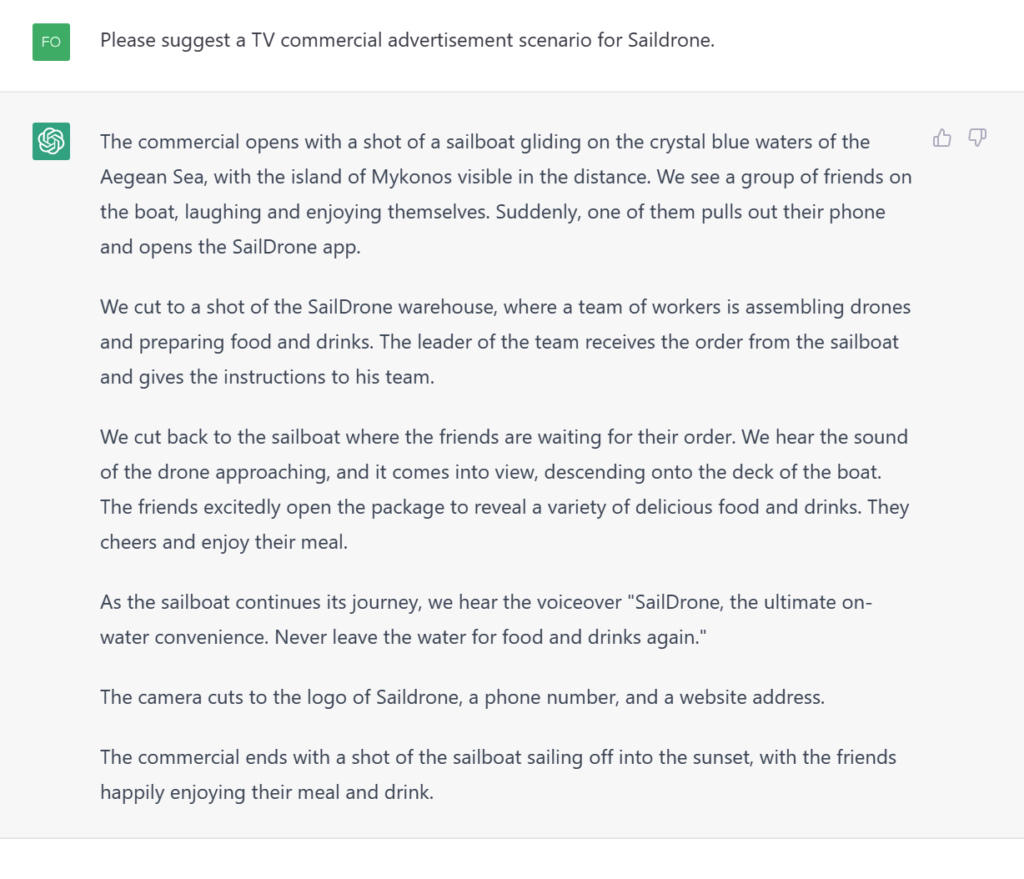

I also asked it to suggest a TV ad scenario, and here is what I got:

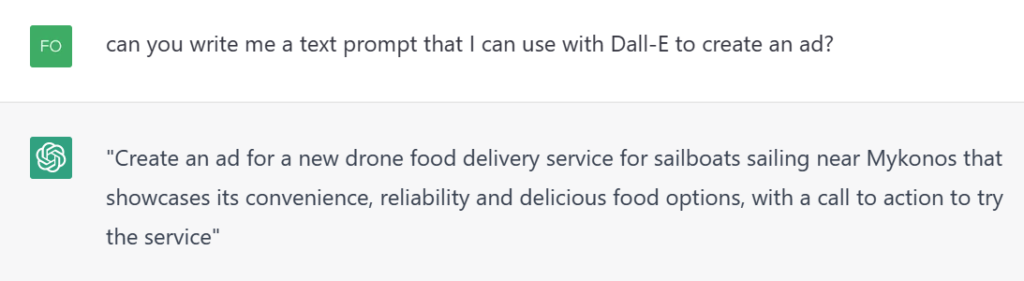

I also tried to find the intersection of ChatGPT with Dall-E, so I asked ChatGPT to create a text prompt for an ad that I can use with Dall-E.

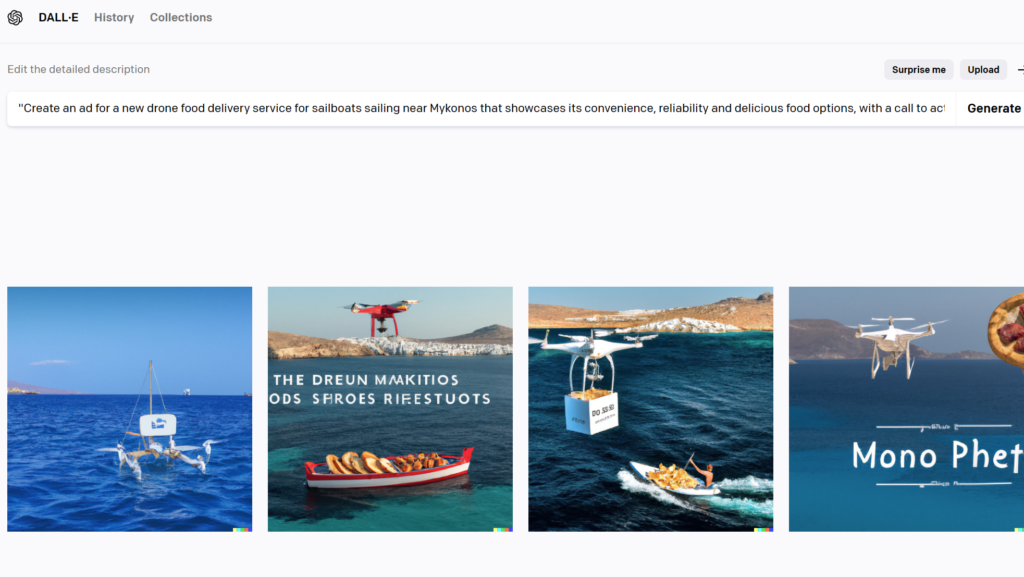

And here is what I got from Dall-E for the above prompt.

Not there yet, but I am sure I can get a better result if I try a few more times.

One of the main advantages of GPT-3 and ChatGPT is that they can be fine-tuned for specific tasks and use cases. For example, a chatbot built using ChatGPT can be fine-tuned to understand and respond to domain-specific phrases. This allows developers to create chatbots that are tailored to their specific business or industry, providing a more personalized experience for users.

So in the above example, if you are the “Saildrone” startup, you could easily create a chatbot that takes orders or replies to customer questions and that is more targeted to the sailing tribe (e.g. the specific phrases that are used to describe ports, docks, and other areas of potential delivery).
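To give a rough idea of what the training data for such a fine-tuned chatbot could look like, here is a minimal Python sketch. It assumes the GPT-3 fine-tuning workflow that takes a JSONL file of prompt/completion pairs; the sample questions and answers, the separator and stop strings, and the `to_jsonl` helper are all made up for illustration, and the exact format may differ by model and API version.

```python
import json

# Hypothetical training examples for the "Saildrone" chatbot; a real
# dataset would need many more pairs taken from actual conversations.
EXAMPLES = [
    ("Can you deliver to a boat anchored off Ornos bay?",
     "Yes, we deliver to anchorages around Mykonos. Please share your GPS position."),
    ("We are moored stern-to at the new marina, berth 12.",
     "Got it. The drone will drop your order at the stern of berth 12."),
]

# Assumed conventions: a fixed separator ends every prompt and a fixed
# stop sequence ends every completion, so the model learns where to stop.
PROMPT_SEPARATOR = "\n\n###\n\n"
STOP_SEQUENCE = " END"


def to_jsonl(examples):
    """Serialize (question, answer) pairs as one JSON object per line."""
    lines = []
    for question, answer in examples:
        record = {
            "prompt": question + PROMPT_SEPARATOR,
            "completion": " " + answer + STOP_SEQUENCE,
        }
        lines.append(json.dumps(record))
    return "\n".join(lines)


print(to_jsonl(EXAMPLES))
```

The resulting text would be saved to a `.jsonl` file and uploaded to the fine-tuning service; the sailing jargon in the examples (anchorages, berths, stern-to mooring) is exactly the kind of vocabulary the fine-tuned model is meant to pick up.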

Another advantage of OpenAI and ChatGPT is that they can be used to create applications with minimal development time. Because GPT-3 and ChatGPT are pre-trained on large datasets, developers can use them as a starting point for their own applications, rather than having to train their own models from scratch. This saves a significant amount of time and resources and allows developers to focus on creating the user interface and other aspects of their applications.

From all the above examples and discussions, we can think of a few potential use cases for OpenAI and ChatGPT (more of them here: https://openai.com/blog/gpt-3-apps/):

- Create chatbots that provide customer service for an e-commerce website

- Help customers of e-banking websites understand account terms and finance jargon in simple words, or navigate the website

- Answer citizens’ questions in government portals on how to do e-citizen tasks

- Summarize medical reports

- Summarize news

- Create articles, essays, blog posts and other content

- Scan employee profiles and provide feedback to HR

- Translate the content of a website in a friendly or business style

- Write replies to emails

- Write simple code that can help us build an application

- Do financial analysis and forecasting

- Do sentiment analysis on call center calls and find the VIP customers who are unhappy with your service

- Generate educational content, games, quizzes and stories for children

- And many more…

The Four Language Models of GPT-3

OpenAI GPT-3 provides four different language models, called Ada, Babbage, Curie, and Davinci.

How do they differ, and what types of apps can you develop with each?

Ada

Ada is the smallest and most cost-effective of the four models, designed for simpler language tasks such as sentiment analysis and intent classification. Ada can understand and respond to text input in a basic way, but it is less capable than the other three models on nuanced tasks. Like the other models, Ada is priced per token, at the lowest rate of the four, making it a good option for startups that need a simple and cost-effective solution.

Use for: Parsing text, simple classification, address correction, keywords

Babbage

Babbage is the next step up from Ada, and it is designed for more complex language tasks such as language translation and text summarization.

Use for: Moderate classification, semantic search classification

Curie

Curie is the next step up from Babbage, and it is designed for even more complex language tasks such as question answering and conversation generation.

Use for: Language translation, complex classification, text sentiment, summarization

Davinci

Davinci is the most powerful of the four models and designed for the most complex natural language tasks such as creative writing, poetry, and fiction generation. It is also the most expensive of the four models.

Use for: Complex intent, cause and effect, summarization for audience

Here are some examples of how to integrate each one of them in your solution:

A startup that specializes in language learning could use Ada to create a chatbot that can hold basic conversations with users in different languages, providing a simple and cost-effective solution. Babbage could be used to create a chatbot that can translate phrases and idioms for users in different languages, providing a more advanced solution. Curie could be used to create a chatbot that can answer questions about grammar and other language-specific topics. Davinci could be used to create a chatbot that can generate creative writing such as poetry.

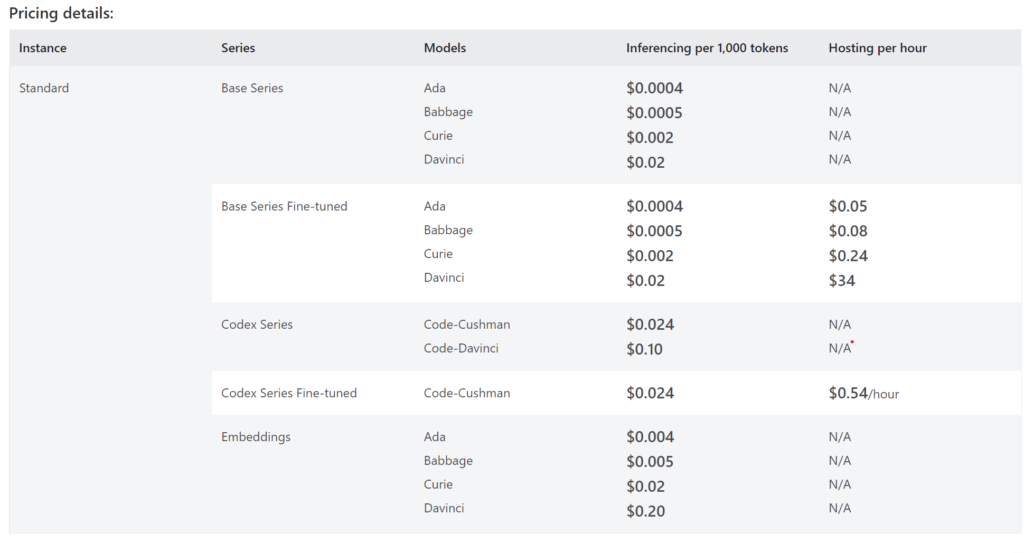

If you are wondering about the pricing of each one, here is the table with the pricing of the different OpenAI language models on Azure.

The Codex Models

Codex is a family of models fine-tuned from the fully trained GPT-3 model. These models can understand and generate code. Their training data contains both natural language and billions of lines of public code from GitHub (159GB of public repository data was used to train the model).

They’re most capable in Python and proficient in over a dozen languages, including C#, JavaScript, Go, Perl, PHP, Ruby, Swift, TypeScript, SQL, and even Shell.

Similar to GPT-3, the Davinci model is the strongest one at analyzing complicated tasks, and the Cushman model is the faster one in code generation tasks (and it is cheaper than Davinci).

You can use Codex via GitHub Copilot: https://github.com/features/copilot/

Here is the OpenAI Codex demo on YouTube:

If you are into reading research papers, this is the one for Codex: https://arxiv.org/abs/2107.03374 and PDF Download of the paper here. The paper reports that Codex solved close to 30% of the problems in HumanEval, the evaluation set that the researchers created.

Going into the potential applications of Codex, here are a few from https://openai.com/blog/codex-apps/ :

- GitHub Copilot is the most famous integration for the moment.

- Pygma aims to turn Figma designs into high-quality code.

- Replit leverages Codex to describe what a selection of code is doing in simple language so everyone can get quality explanation and learning tools. Users can highlight selections of code and click “Explain Code” to use Codex to understand its functionality.

- Machinet helps professional Java developers write quality code by using Codex to generate intelligent unit test templates.

I think that what matters most to startups here is saving their dev team time. The dev cost per hour ranges from 20 USD to even 200 USD, so every hour saved is meaningful. And Codex and GitHub Copilot seem to be delivering on that front.

OpenAI, ChatGPT and Azure: Integration, How to get Access, Advantages, Cost

Integration

Both OpenAI and ChatGPT are integrated with Azure using the OpenAI GPT-3 API. The API can be accessed from Python, Java, C#, JavaScript etc., using Azure’s Cognitive Services, which is a set of pre-built APIs for natural language processing, computer vision, and other AI-related tasks. There is also a ChatGPT extension for VS Code: https://gpt3demo.com/apps/chatgpt-for-vscode
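To make the integration a bit more tangible, here is a minimal Python sketch that builds a text completion request for an Azure OpenAI deployment using only the standard library. Treat it as a sketch under assumptions rather than the official way to do it: the resource name, deployment name and API key are placeholders, the `api-version` value should be checked against the current Azure documentation, and the helper name `build_completion_request` is made up for this post.

```python
import json
import urllib.request

# Assumption: verify the currently supported version in the Azure docs.
API_VERSION = "2022-12-01"


def build_completion_request(resource, deployment, api_key, prompt, max_tokens=100):
    """Build (but do not send) a POST request to an Azure OpenAI deployment."""
    url = (
        f"https://{resource}.openai.azure.com/openai/deployments/"
        f"{deployment}/completions?api-version={API_VERSION}"
    )
    body = json.dumps({"prompt": prompt, "max_tokens": max_tokens}).encode("utf-8")
    headers = {"Content-Type": "application/json", "api-key": api_key}
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


request = build_completion_request(
    resource="my-resource",      # hypothetical Azure resource name
    deployment="my-davinci",     # hypothetical deployment name
    api_key="<your-api-key>",    # placeholder, never hard-code real keys
    prompt="Write a tagline for a drone delivery service for sailboats.",
)
print(request.full_url)

# To actually call the service (requires a valid key and deployment):
# with urllib.request.urlopen(request) as response:
#     print(json.load(response)["choices"][0]["text"])
```

In a real application you would more likely use an official SDK for your language, but the raw request shows that the integration boils down to an HTTPS call with a JSON body and an API key header.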

How to Get Access To Azure OpenAI

If you want to try OpenAI and ChatGPT on Azure, just create an Azure account and sign up for the OpenAI GPT-3 API. If you are a startup, you can get $2.500 USD in free credits for OpenAI by applying to the Founders Hub program.

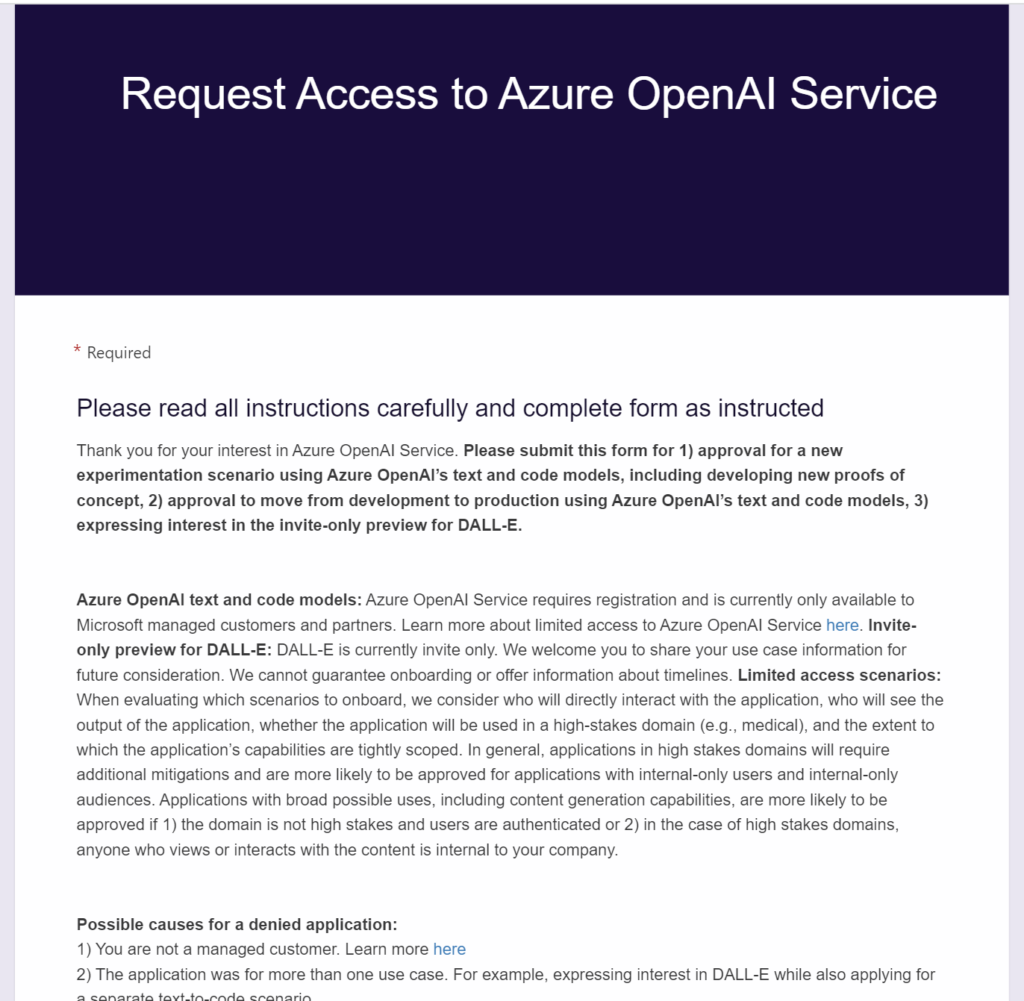

If you are not a startup, then you have to apply for access here.

Note: On January 16, 2023, Microsoft announced the General Availability of the Azure OpenAI service.

Advantages of OpenAI on Azure

One of the main benefits of using OpenAI and ChatGPT on Azure is scalability. Because the GPT-3 model is hosted on Azure’s cloud, developers can easily scale their applications to handle a large number of requests without having to worry about managing their own infrastructure. Additionally, using Azure’s Cognitive Services allows developers to add more functionality to their applications without having to build it from scratch.

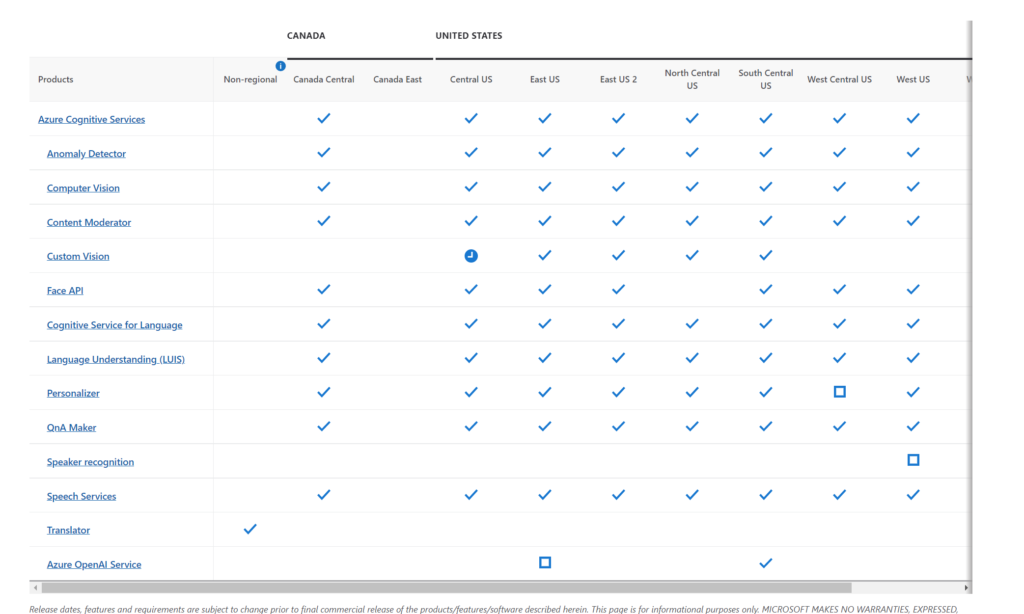

Azure Cognitive Services is a collection of pre-built APIs for natural language processing, computer vision, and other AI-related tasks. These APIs can be used to add additional functionality to applications built with OpenAI and ChatGPT, such as natural language understanding, sentiment analysis, and image recognition.

Some of the Azure Cognitive Services functionality that developers can use includes:

- Language Understanding (LUIS): This API allows developers to add natural language understanding to their applications. It can be used to identify and extract entities, intents, and other information from text input.

- Text Analytics: This API allows developers to extract insights from unstructured text data, such as sentiment analysis, key phrase extraction, and language detection.

- Speech to Text and Text to Speech: These APIs allow developers to add speech recognition and text-to-speech capabilities to their applications.

- Computer Vision: This API allows developers to analyze images and videos to extract information such as objects, faces, and text. It can be used for tasks such as image recognition, object detection, and OCR.

- Personalizer: This API allows developers to personalize the user experience by providing recommendations and content tailored to individual users.

- Anomaly Detector: This API allows developers to detect anomalies in time series data, such as network traffic or sales data.

- Translator: This API allows developers to add language translation functionality to their applications, supporting more than 60 languages.

- News Search: This API provides access to a search index of news articles, allowing developers to search for news by keyword, category, or location.

So, in simple terms, by using all these APIs, your developers don’t have to build everything from scratch and can focus on the UI, security and other aspects of their apps. You also get all the benefits of a managed service (scalability, elasticity, performance, security etc.).

Pricing of the Azure OpenAI service

OpenAI on Azure uses a pay-as-you-go pricing model, so developers only pay for the requests they make to the API. How much does it cost? You can check the pricing details here and at the table below.

Since the API pricing is based on tokens, it’s important to understand them.

A token is a bundle of a few letters. For example, the word hamburger is 3 tokens (ham – bur – ger). The complete works of Shakespeare are about 900.000 words, which is around 1.2 million tokens. So, as a rule of thumb, 750 words are worth about 1000 tokens, a token-to-word ratio of roughly 1.33. The example later in this section uses a slightly more conservative ratio of 1.4.

Another pricing consideration is that, in each API call, you get charged both for the prompt you are sending and for the predicted text.

So, if you are using the best and most expensive model of OpenAI, the “Davinci” model, it is 2 cents for every 1000 tokens (roughly 750 words sent to or produced by the API). This can easily add up to large numbers if you are building apps that will be heavily used in terms of text by a large customer base. Make sure you take this into account when building your apps. There are startups that had to move away from OpenAI at a later stage due to costs (e.g. see the Replika presentation on that here).

Below is an example of how to calculate the total cost of using the OpenAI service on Azure. Basically, you need to factor in:

- Which language models you are going to use (Davinci? Curie? Both?)

- The Tokens you will need per day

- The hours you are going to deploy the model

- The Fine-tuning cost

Let’s look into a simple example of a pricing calculation for a new solution.

Let’s suppose that you want to create an application that connects to the customer feedback chatbot on the website of a popular e-commerce store, and to its call center to do voice-to-text of customer feedback calls. It then analyzes the feedback, classifies complaints and does sentiment analysis.

Let’s assume that you have 60 calls per hour from 8:00 to 20:00 (12 hours per day), 6 working days per week. You also have 20 customers per hour providing written feedback via the website chatbot during the same 12 hours per day. Let’s assume 250 words of text per customer for both channels (website chatbot and call center).

So:

- 80 touchpoints per hour, with 250 words per touchpoint, 12 hours per day

- This is a total of 80x12x250=240.000 words per day

- 1 word is about 1.4 tokens, so we need 240.000×1.4=336.000 tokens per day

- The Davinci model costs 0.02 USD per 1000 tokens, so this is 6.72 USD per day, or around 161 USD for the 24 working days of the month (running the service from Monday to Saturday).
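If you prefer to play with the numbers yourself, here is a minimal Python sketch of the same back-of-the-envelope calculation. All constants are the assumptions from the example above (80 touchpoints per hour, 250 words each, 1.4 tokens per word, 0.02 USD per 1000 tokens, 24 working days); the function name `monthly_cost` and the `traffic_multiplier` parameter are made up for illustration. Note that it only counts the customer text sent to the model, so the generated output, which is also billed, would come on top.

```python
# Assumptions copied from the example above; adjust them to your own traffic.
TOUCHPOINTS_PER_HOUR = 60 + 20       # call center calls + chatbot messages
HOURS_PER_DAY = 12
WORDS_PER_TOUCHPOINT = 250
TOKENS_PER_WORD = 1.4
PRICE_PER_1000_TOKENS_USD = 0.02     # Davinci price quoted above
WORKING_DAYS_PER_MONTH = 24


def monthly_cost(traffic_multiplier=1):
    """Return (tokens per day, USD per day, USD per month) for the scenario."""
    words_per_day = (
        TOUCHPOINTS_PER_HOUR
        * traffic_multiplier
        * HOURS_PER_DAY
        * WORDS_PER_TOUCHPOINT
    )
    tokens_per_day = words_per_day * TOKENS_PER_WORD
    usd_per_day = tokens_per_day / 1000 * PRICE_PER_1000_TOKENS_USD
    return tokens_per_day, usd_per_day, usd_per_day * WORKING_DAYS_PER_MONTH


# Compare the base scenario with 10x and 100x the traffic.
for multiplier in (1, 10, 100):
    tokens, per_day, per_month = monthly_cost(multiplier)
    print(
        f"x{multiplier}: {tokens:,.0f} tokens/day, "
        f"{per_day:,.2f} USD/day, {per_month:,.2f} USD/month"
    )
```

With a multiplier of 1 this reproduces the 6.72 USD per day from the list above, and the higher multipliers show how quickly the bill grows with traffic.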

Now that doesn’t seem much, but the calls per hour might be 10 to 100 times higher and the same could go for the feedback gathered from the website, depending on the popularity of the store and the market it operates in.

Finally, bear in mind that for the moment the OpenAI service on Azure is available in the South Central US region, so you may want to test your application for latency-related issues.

Use Cases and Examples of Startups that are currently using OpenAI

Here are a few examples of use cases and startups using OpenAI, for your inspiration.

CarMax: Used car retailer CarMax has used Azure OpenAI Service to help summarize 100,000 customer reviews into short descriptions that surface key takeaways for each make, model and year of vehicle in its inventory. Read about the case study here.

In the healthcare industry, GPT-3 is being used to generate medical reports and summaries. A startup called Medopad uses GPT-3 to generate medical reports from patient data, allowing doctors to spend more time with patients and less time on paperwork. Another company called Freenome uses GPT-3 to analyze genetic data and identify potential health risks, helping doctors make more informed decisions about patient care.

In the finance industry, GPT-3 is being used for financial analysis and forecasting. A startup called Blue River uses GPT-3 to analyze financial news and generate reports on market trends, helping traders make more informed decisions. Another company called Alpaca uses GPT-3 to analyze financial data and generate trading strategies, helping traders identify profitable opportunities in the market.

In the e-commerce industry, GPT-3 is being used to generate product descriptions, improve search results and create chatbots that can answer customer questions. A company called Verbling uses GPT-3 to generate product descriptions for their language learning platform, and another company called Sift Science uses GPT-3 to improve search results on their e-commerce platform.

The next four are among the startups funded by OpenAI:

Descript is a powerful video editor reshaping the way creators engage with content by using AI to make video editing as simple as editing a text document.

Harvey is developing an intuitive interface for all legal workflows through powerful generative language models. Its technology expands a lawyer’s capabilities by leveraging AI to make tedious tasks such as research, drafting, analysis, and communication easier and more efficient. This saves lawyers time, ultimately allowing them to deliver a higher quality service to more clients.

Mem is building the world’s first self-organizing workspace. Starting with personal notes, Mem uses advanced AI to organize, make sense of, and predict which information will be most relevant to a user at any given moment or in any given context. Mem’s mission is to build products that inspire humans to create more, think better, and spend less time searching and organizing.

Speak is on a mission to help more people become fluent in new languages, starting with English. The company initially launched in East Asia with a focus on South Korea, and has nearly 100,000 paying subscribers. Speak is creating an AI tutor that can have open-ended conversations with learners on any number of topics, providing real-time feedback on pronunciation, grammar, vocabulary, and more.

There are more apps for your inspiration at https://openai.com/blog/gpt-3-apps/ and as more companies discover the benefits of using OpenAI and ChatGPT on Azure, we could expect to see even more innovative use cases and success stories in the future. You can also have a look at https://gpt3demo.com/ and https://gptcrush.com/ for a list with apps that use GPT-3

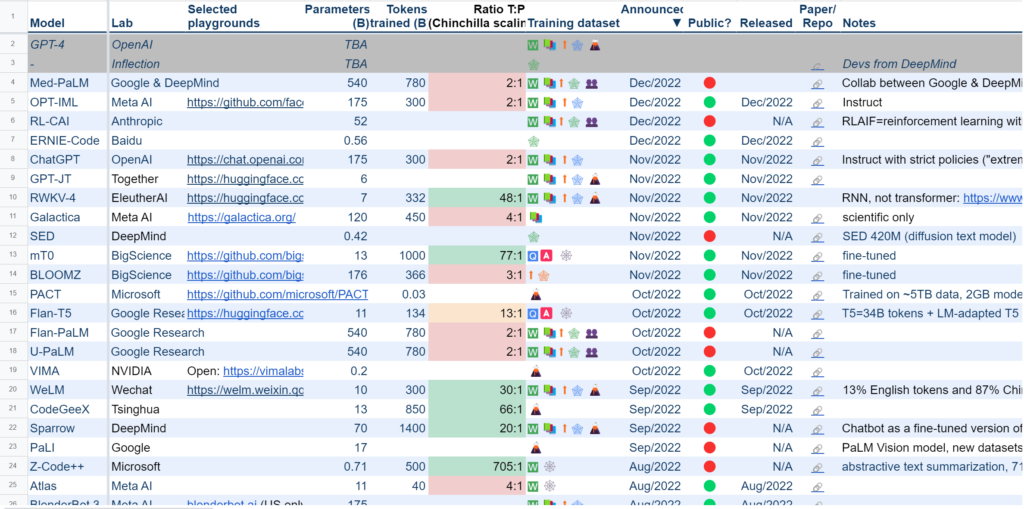

OpenAI Competition

Here are the main competitive solutions to OpenAI’s GPT-3:

- Anthropic AI: Its language model performance seems to be in the top 3 at the moment

- Google: Google has several AI models, such as BERT and T5, which are similar to OpenAI’s GPT-3

- Microsoft: Microsoft has the Azure Cognitive Services, which include LUIS (Language Understanding) and Text Analytics

- Amazon: Amazon has Amazon Lex and Amazon Transcribe, which are similar to OpenAI’s GPT-3

- Facebook: Facebook has an AI platform which includes RoBERTa, which is similar to OpenAI’s GPT-3

- IBM: IBM Watson is the relevant solution here, which offers natural language processing, computer vision and other tasks

- Baidu: Baidu is a Chinese company with several research teams on AI, and its model similar to OpenAI’s GPT-3 is called ERNIE.

A summary of all the language models can be found here and an assessment of language models from Stanford University can be found here.

Limitations of OpenAI & ChatGPT

OpenAI and ChatGPT are powerful AI tools, but like any technology, they have some limitations. Some of the main limitations of OpenAI and ChatGPT include:

1. Data bias: OpenAI and ChatGPT are trained on large datasets of internet text, which can introduce bias into the model’s predictions and outputs. This can be particularly problematic for sensitive applications, such as healthcare or financial analysis.

For example, if a chatbot that uses GPT-3 is trained on a dataset that is mostly written by men, it may not be able to understand or respond well to text written by women, or it may generate responses that are insensitive or offensive to women. Similarly, a language model that is trained on a dataset that is mostly written in English may not be able to understand or respond well to text written in other languages, or it may generate responses that are not accurate or appropriate for that language.

2. Lack of control: OpenAI and ChatGPT are pre-trained models, which means that users have limited control over the specific parameters and settings of the model. This can make it difficult for users to fine-tune the model for specific use cases.

For example, a startup that wants to create a chatbot that can hold natural conversations with users in a specific industry, such as finance, may not be able to tune the pre-trained model out of the box to understand and respond to industry-specific phrases and jargon. This can make it difficult for the chatbot to hold natural conversations with users in that industry and make the experience less personalized for the users.

Another example: if a startup wants to create a language model that can generate text in a specific tone or style, such as a formal tone, it may not be able to adjust the pre-trained model to generate text in that specific tone. This can make it difficult for the model to generate text that is appropriate for the desired use case.

It’s important to note that OpenAI has released a number of tools and guidelines for fine-tuning GPT-3 for specific use cases, such as the GPT-3 fine-tuning API, which allows developers to fine-tune the model on their own dataset, making the model more suitable for their specific task. Additionally, OpenAI offers several options for controlling the temperature and other parameters of the model to make it more suitable for specific use cases.

3. Limited interpretability: OpenAI and ChatGPT are neural networks, which means that they can be difficult to interpret and understand. This can make it difficult for users to understand how the model is making its predictions and to identify and correct errors.

4. High cost: OpenAI and ChatGPT are commercial products, which means that they can be quite expensive to use, depending of course on the use case. This can be a barrier for some startups and small businesses that may not have the budget to use them.

5. Training data cutoff: The model is trained on data up to 2021, so it misses a couple of years of new events and information.

6. Prompt size: The maximum length of an OpenAI text prompt is 2048 tokens, which is around 1500 words.
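To make points 2 and 6 a bit more concrete, here is a small Python sketch that sets the temperature and checks a prompt against the token limit before it is sent. It rests on several assumptions: the 1.4 tokens-per-word rule of thumb used in the pricing example (a real tokenizer would give exact counts), a temperature range of 0 to 2, and a 2048-token limit that is shared between the prompt and the generated text. The helper names `estimate_tokens` and `build_payload` are hypothetical.

```python
TOKENS_PER_WORD = 1.4        # rough rule of thumb, not an exact tokenizer
MAX_CONTEXT_TOKENS = 2048    # limit quoted above, assumed to cover prompt + output


def estimate_tokens(text):
    """Very rough token estimate based on the word count."""
    return int(len(text.split()) * TOKENS_PER_WORD) + 1


def build_payload(prompt, max_tokens=256, temperature=0.2):
    """Return a completion request body, or raise if the prompt is too long."""
    # Assumed valid range: lower values give more predictable text.
    if not 0 <= temperature <= 2:
        raise ValueError("temperature is expected to be between 0 and 2")
    budget = MAX_CONTEXT_TOKENS - max_tokens
    needed = estimate_tokens(prompt)
    if needed > budget:
        raise ValueError(f"prompt needs ~{needed} tokens, budget is {budget}")
    return {"prompt": prompt, "max_tokens": max_tokens, "temperature": temperature}


payload = build_payload(
    "Rewrite this customer reply in a formal tone: thanks, we'll fix it asap",
    temperature=0.0,
)
print(payload)
```

A low temperature is a reasonable starting point when you need a consistent tone, while long documents would have to be split into chunks that fit the budget before being summarized.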

Resources and Further Reading

- OpenAI’s documentation: https://beta.openai.com/docs

- Azure’s documentation: https://docs.microsoft.com/en-us/azure/cognitive-services/ and https://learn.microsoft.com/en-us/azure/cognitive-services/openai/overview

- Community forums: OpenAI API Community Forum and Azure Cognitive Services Forums

- Webinars and training sessions: https://openai.com/blog/tags/events/ and https://azure.com/events

- Partner program: https://partnershiponai.org/partner/openai/

- Blogs and articles: https://beta.openai.com/blog/ and https://azure.com/blog

- Case studies: https://openai.com/blog/gpt-3-apps/